Co-Authors: Dana Yeo, Yebin, Tze Kiat

1. Introduction

Hi there, welcome to our Wikiblog!!! 🙂

In this wiki chapter, we hope to provide insightful content on how the study of manual gestures relates to language evolution. Most of our information is drawn from “The Oxford Handbook of Language Evolution”, edited by Tallerman and Gibson. Simply by exploring sign languages, linguists can make many discoveries that help them piece together the puzzle of how language evolved. We will start with gestures in primate communication and then gradually move on to the gestures that people use in current times.

Here are our aims for this WikiChapter:

- Investigating the gestures of primates in view of how they can relate to language evolution in humans.

- Explaining the origins of language in manual gestures.

- Exploring gestures in current times to find out more about evolution.

Continue reading to find out more!!!

2. Gesture as modality of primate communication

2.1 Why do we look at gestures in primates?

We can look to the communication of extant non-human primates to help shape the discussion of gestures as a window to language evolution. As evolutionary linguistics focuses on biological adaptation for language, studying primates that share a common ancestor with humans might give us some understanding of how our language evolved. A gesture that occurs in these primates as well as in humans is highly likely to have been present in our last common ancestor too.

Though the vocal modality seems to be the innate mode of complex communication for humans, studies have argued that hominin ancestors used manual gesture in a linguistic capacity prior to speech (Arbib, Liebal, & Pika, 2008). Hominins are the group consisting of modern humans, extinct members of the human lineage, and all our immediate ancestors. Thus, looking into manual gestures can be useful for the discussion of language evolution.

2.2 Manual Gestures in primates: Examples

Primates regularly use manual gestures, facial expressions and body posture for communication. For instance, chimpanzees and bonobos wave at each other, shake their wrists when impatient, beg for food with open hands held out, flex their fingers towards themselves when inviting contact, and move an arm over a subordinate in a gesture of dominance (Pollick, Jeneson, & de Waal, 2008). Humans use many of these actions to interact with one another too. However, compared with these gestures, the sign languages that humans use are much more complex, as they allow us to convey far more intricate messages.

2.3 Manual Gestures in primates: Lack of Variety

The simple manual gestures that primates use lack the wide variety found in human sign language systems. For primates, the same manual gesture can be used in different situations and carry an entirely different meaning in each context. For example, a bonobo stretching out an open hand towards a third party during a fight signals a need for support, whereas the same gesture towards another bonobo with food signals a desire for a share (Pika, Liebal, Call & Tomasello, 2005). Sign languages hardly ever work this way: it is unlikely for a sign to convey one meaning in one context and an entirely different meaning when exactly the same action is used in another.

2.4 Manual Gestures in primates: Population-specific

Chimpanzees have population-specific communicative behaviours, which are comparable to the cultural variations of gestures in humans. Some of these behaviours can be found only in specific areas. The chart above shows examples of population-specific communicative behaviours (Boesch, 1996).

Present only in Tai and Mahale, “Hand-clasp”: Two chimpanzees clasp hands overhead, grooming each other with the other hand.

Absent only in Bossou, “Play-start”: When initiating social play, one of the youngsters will break off a leafy twig or pick up some other object and, with this in his mouth or hand, approach his chosen playmate.

Similarly, humans show cultural variations in gestures, and the same gesture can mean very different things in different cultures. For instance,

This sign can mean “okay” in Singapore and the United States, while in Greece, Spain or Brazil it means you are calling someone an a**hole.

2.5 Manual Gestures in primates: Multimodal Signalling

Multimodal signalling is a combination of communicative signals such as vocalization, gestures and facial expression. This can be found in both humans and primates. Studies have shown that chimpanzees seem to combine gestures with vocalizations more often than gestures with facial signals, while bonobos exhibit no bias toward either a facial expression or a vocalization when combining their gestures.

For humans, anatomical changes mean that we now rely much more on vocalisation. Complex vocalisation is our main mode of communication, while gestures and facial expressions complement it. This is something that our closest relatives, chimpanzees and bonobos, have been unable to develop.

From here, we can see how language has evolved along with our anatomical changes, and how this has given us the ability to use all sorts of multimodal signals to express ourselves and even to develop language systems like sign language, which stems from both manual gestures and spoken language.

2.6 CONCLUSION: Linking back to Language Evolution…

Looking at all these similarities between humans and other primates opens up possibilities for how language evolved: manual gestures could have developed into spoken languages and then into sign languages.

Early hominins could have started communicating with a system that was similar to manual gestures, and as hominins evolved biologically, so did their language systems.

3. The origins of language in manual gestures

3.1 Development

How did language evolve? As distinct systems of communication, there are two effective modes of language that are available to us, these being verbal language and manual gestures. Nowadays, humans habitually use the former to express their intentions, with the latter being a kind of supplement to get the message across. However, it seems likely that this was not always the case. Instead, manual gestures once held priority, with vocal elements only gradually coming to take centre stage later on in the evolution of humanity.

(cf. Corballis 2002; Armstrong and Wilcox 2007; Rizzolatti and Sinigaglia 2008)

3.2 Intentionality

Intentionality is a philosophical concept, defined by the Stanford Encyclopedia of Philosophy as “the power of minds to be about, to represent, or to stand for, things, properties and states of affairs”. Basically, just by focusing and directing your mind towards something, you have a directed intentionality towards it. With regard to language evolution, systems of language, whether verbal or gestural, are all intentional systems in themselves. As the ability to communicate originates in the mind, observing what living beings are prone to do in their natural environments helps us to grasp their innate capacity for language. For instance, attempts to teach great apes anything resembling human speech have failed (Hayes & Hayes 1952), but reasonable success has been achieved in teaching them forms of visible language (Savage-Rumbaugh et al. 1998). This suggests that early hominins were much more predisposed to, and thus more likely to have possessed, an intentional system of communication based primarily on manual gestures rather than vocal calls.

3.3 The power of time

Eventually, evolution took its course. Our brain sizes began to increase dramatically, and somewhere along the line, verbal language started to appear. We became able to share complex intentionalities and build complex systems. For verbal intentional systems, eyesight is no longer a limiting factor as it is with manual gestures. Past limitations on communication would correspondingly have vanished, including the inability to communicate at night because of the darkness, or over longer distances where the other party is out of view. With spoken words becoming the new priority, our ancestors would have been able to go from mere miming to a more complex and useful intentional system. We became able to make tools and to share thoughts on the past and future rather than just the present. This culminated in the fruits of progress that we see today, even as our languages are constantly conventionalised or ‘updated’ over time due to globalisation, necessity and the like. While this process is most apparent in radical, sweeping changes to language systems, subtle new phrases are always popping up too.

4. Gesture as a good venue for innovation

If children who have been told to gesture are then given instruction in how to solve the problems, they are more likely to profit from the instruction than children who were told not to gesture. Gesturing thus brings out implicit ideas, which, in turn, can lead to learning (Broaders et al., 2007).

We can even introduce new ideas into children’s cognitive repertoires by telling them how to move their hands. For example, if we make children sweep their left hand under the left side of the mathematical equation 3+6+4=__+4 and their right hand under the right side of the equation during instruction, they learn how to solve problems of this type. Moreover, they are more likely to succeed on such problems than children told to say “The way to solve the problem is to make one side of the problem equal to the other side” (Cook et al., 2007).

Besides, according to Goldin-Meadow et al. (2009), gesturing promotes new ideas. The children may be extracting meaning from the hand movements they are told to produce. If so, they should be sensitive to the specific movements they produce and learn accordingly. Alternatively, all that matters may be that the children are moving their hands. If so, they should learn regardless of which movements they produce. In fact, children who were told to produce movements instantiating a correct rendition of the grouping strategy during instruction solved more problems correctly after instruction than children told to produce movements instantiating a partially correct strategy, and the latter group solved more problems correctly than children told not to gesture at all.

Therefore, the manual modality is a good venue for innovation, because ideas expressed in this modality may be less likely to be challenged than ideas expressed in the more explicit and recognised oral modality. Because gesture is less monitored than speech, it may be more welcoming of fresh ideas.

5. Gestures to Sign Language

5.1 From gestures to co-speech

The origin of human language has always been a debated topic. One hypothesis is that spoken language originally derived from gestures (Kendon, 2016; Armstrong, Stokoe & Wilcox, 1995; Corballis, 2002). Gestures, a universal feature of human communication, function as a visual language when verbal expression is temporarily disrupted. They are often used in dyadic interactions. In fact, language areas in the brain, such as Broca’s area, are particularly active when observing gestural communication (Paulesu, Frith, & Frackowiak, 1993; Zatorre et al., 1992). This is in line with Condillac’s (1746) suggestion that spoken language developed from gestures.

However, it is worth noting that spoken language did not evolve directly from gestures. Rather, co-speech gestures (where “speech” here means vocalizations rather than actual speech) set in before spoken language. This is evident in early language acquisition in children, where word comprehension and production occur after nine months of age whereas intentional control of the hands and babbling occur before eight months of age (Rochat, 1989; Iverson & Thelen, 1999; Vauclair, 2004). Bernardis and Gentilucci (2006) also highlighted that sound production was incorporated into the gestural communicative system of male adults when interacting with objects or when the meaning of abstract gestures was required. Sounds can give more salience to gestures when communicating information to other interlocutors. Thus, the use of gestures with sound confers an advantage in the creation of a richer vocabulary.

Furthermore, speech and gestures share common neural substrates. Not only was there a tendency to produce gestures at the same time as the spoken word, information conveyed verbally was also reduced as gestures supplement it, and vice-versa. As such, it is evident that vocalizing words and displaying symbolic gestures with the same meaning are controlled by a single communication system.

5.2 From co-speech gestures to spoken language

While it may seem that co-speech gestures provided a more enhanced communication system, co-speech gestures have limitations. Steklis and Harnad (1976) mentioned them as follows:

- Gestures are of no use in the dark, or across partitions

- Inability to refer to the absence of the referent or past / future

- Eyes and hands are occupied

- Slow and inefficient when:

> Several people are communicating

> Crucial information is immediately required

These limitations could have led to increasing reliance on spoken language. Furthermore, it was emphasized that gestures were already somewhat arbitrary by this time. Thus, spoken language became the obvious solution to the above-mentioned limitations.

Spoken language evolution is also closely linked to biological evolution. Fitch (2000) highlighted that control over vocalization was largely due to the modification of the vocal tract in two positions. A slow descent of the larynx to the adult position, seen in babies from three months of age, is said to have an impact on vocalization (Sasaki et al., 1977). It allowed more room for the human tongue to move vertically and horizontally within the vocal tract (Lieberman et al., 1969). In addition, the tongue and lips played an important role in vocalization by constricting the airways (Boë et al., 2017). Furthermore, increased brain complexity allowed the creation of more complex meanings through combinations of sounds (Jackendoff, 2006). This developed into more complex linguistic elements such as lexicons and sentences.

5.3 Sign Language

Spoken language is natural and ubiquitous. But what about people who are unable to communicate verbally or who have hearing impairments? Some create their own language: a sign language.

According to StartASL.com (2017), Aristotle was the first to have a claim recorded about the deaf. He theorised that people can only learn through hearing spoken language. Deaf people were therefore seen as disadvantaged and unable to learn or be educated. This led to the first documentation of a sign language in the 15th century AD, which was said to be French Sign Language (FSL). Different sign languages based on FSL, such as American Sign Language (ASL), then began to emerge because a language was needed to communicate with and educate deaf people. However, some sign languages, such as Nicaraguan Sign Language (NSL), emerged organically without being modeled on earlier sign languages.

The manual modality conveys information imagistically, and this information has an important role to play in both communication and thinking. When the manual modality serves as a language in its own right, it changes its form and itself becomes segmented and combinatorial. We see this phenomenon in conventional sign languages passed down from one generation to the next, but it is also found, and is particularly striking, in emerging sign languages.

Conventional sign languages are autonomous languages, independent of the spoken languages of the hearing cultures that surround deaf communities. Signs are combined to create larger whole sentences, and these signs are themselves composed of meaningful components (morphemes). Many signs and grammatical devices do not have an iconic relation to the meanings they represent. For example, Klima and Bellugi (1979) showed that the sign for ‘slow’ in American Sign Language (ASL) is made by moving one hand across the back of the other hand. When the sign is modified to mean ‘very slow’, it is made more rapidly, since this is the particular modification of movement associated with an intensification meaning in ASL.

6. What sign languages tell us about language evolution

Here are some insights on language evolution that can be gleaned from studying sign language, especially emerging sign languages.

6.1 Sign Language in general

Studying the fingerspelling development of deaf children can potentially explain how Hockett’s ‘duality of patterning’ feature arose. Duality of patterning is the ability to create meaningful units (utterances) from non-meaningful units (phones, or individual sounds like /p/ in ‘pat’). Research shows that deaf children initially treat finger-spelt words as lexical items rather than as a series of letters representing English orthography. They begin to link handshapes to English graphemes only at around age 3 (Humphries & MacDougall, 2000). However, this link is not based on a phonological understanding of the alphabetical system. Rather, they view the handshapes as visual representations of a concept.

This can be illustrated with a fingerspelling experiment done with deaf children. In an experiment by Akamatsu (1985), some children produced correct handshapes (the shapes of the fingerspelled letters) but wrong spellings, while others had correct spellings but wrong handshapes. For the former, the order of spelling is not important as long as all or most of the elements in a word are present (refer to Fig. 1 and 2). The latter blend letters from the manual alphabet together instead of spelling them individually, which affects the shape of the fingerspelled letters. Akamatsu therefore proposed that the children were analyzing fingerspelling as a complex sign rather than a combination of letters. Only at about 6 years of age, when they started going to school, were they able to understand the rules that govern spelling. From this, we infer that humans do not naturally produce these letters or phones as a precursor to language use. In fact, it is the converse: we develop language first before realising that it can be broken down into smaller units. This could also hold true for language evolution. Long vocalisations could have come first, and people could then have realised that these could be broken up and recombined to form other meanings.

Fig.1: Fingerspelling of the word ‘Ice’

Fig.2: Wrong order of fingerspelling for the word ‘Ice’

6.2 Emerging Sign Languages

Research into emerging sign languages may give us a glimpse of how early human language could have evolved as we can closely observe the inception and development of a new language. It tells us why and how language could have evolved, given a fully developed human brain and physiology.

With this in mind, what then do emerging sign languages tell us about language evolution? First, there must be a community of people who communicate in that language. This community must consist of more than just a few people, so a family unit is insufficient. Home signs can develop in a family with at least one deaf signer and other hearing members, but they will not evolve into a language. A larger group of users is needed for language to evolve. One reason for this is the need for at least two generations of signers for a rudimentary sign system to evolve into a language (Senghas, Senghas & Pyers, 2005). The first generation provides a shared symbolic environment, or vocabulary. The second generation then uses the signs created by the first generation in a more systematic manner and develops a grammatical system. Hence, we can postulate that a proto-language needed to have been used by a community of people for it to eventually develop into a full-blown language such as English. However, there is no known fixed number of speakers that ensures these rudimentary systems eventually evolve into languages.

Second, a shared vocabulary develops before grammar. This is seen when observing first generation users of emerging sign languages. They have a strong tendency to use only one nominal in each sentence. For example, to convey ‘A girl feeds a woman’, first generation signers would instead sign ‘WOMAN SIT’, ‘GIRL FEED’. This can be attributed to the lack of grammar to differentiate between Subject (‘A girl’) and Object (‘a woman’) in the first generation (Meir, Sandler, Padden & Aronoff, 2010). Thus, language could be said to have developed words first, with grammar developing slowly afterwards.

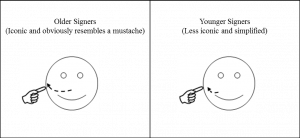

Third, words could have started as iconic and gradually become more arbitrary. This is seen in emerging sign languages. Younger signers (2nd generation onwards) simplify signs created by the first generation (Erard, 2005). For example, as shown in Fig. 3, the sign ‘MAN’ among older Al-Sayyid Bedouin Sign Language (ABSL) signers is the gesture of a mustache twirl, presumably because men have mustaches. But for younger signers, it is merely a twist of the index finger at the upper lip. Thus, signs that were initially iconic gestures start to lose certain characteristics that represent the physical objects they embody and become more arbitrary due to minimization of movement. This could also have happened to spoken languages, where words may originally have been more onomatopoetic but were simplified by later generations until they bore little or no resemblance to the actual referents.

Fig. 3: Simplification of the sign for ‘MAN’ in ABSL

Moreover, the way and rate at which a language evolves may be influenced by the community. For example, NSL and ABSL are both emerging sign languages, yet they have markedly different grammars. NSL, similar to more developed sign languages, has a rich inflection system which reduces its reliance on a strict word order (Erard, 2005). An example of inflection in NSL is how signing two actions slightly to the side instead of forward indicates that the same person is doing both actions (Senghas, Senghas & Pyers, 2005). Conversely, ABSL does not have rich inflections and instead relies on strict word order to avoid ambiguity (Fox, 2007). Some attribute this to the nature of the two communities. NSL is considered to be a deaf community sign language whereas ABSL is a village sign language. This means that ABSL developed among people of the same village, whereas NSL developed among a group of people who lived in different areas but were brought together for some reason, usually for education. Thus, NSL has a bigger, more diverse community than ABSL and also has more members joining the community each year. This may be the cause of NSL’s more accelerated grammatical development.

Interestingly, while all emerging sign languages are developing syntax, there is no single path to its development. ABSL relies heavily on word order while Israeli Sign Language (ISL) is developing verb agreement and relies less on word order. Although both are emerging sign languages, they are not developing the same areas of syntax or grammar. Instead, they are going in totally opposite directions with regard to word order and morphology. This could imply that different languages evolved differently from the very start.

7. Conclusion

To this day, the origins of human language remain highly contentious. The theory that we explored in this WikiChapter hypothesises that language evolved from gestures. As humans evolved, spoken language was created and slowly replaced gestures. Languages in current times are far more efficient than those of the early days. By investigating the manual gestures that primates use, the origins of language in manual gesture, and finally how sign languages developed and became so much more complex in modern days, we have tried to show how gestures can be a window to language evolution.

With that, we hope that our wikichapter has helped you to understand language evolution better! 🙂

References

Akamatsu, C. (1985). Fingerspelling formulae: A word is more or less than the sum of its letters. In W.Stokoe & V.Volterra (Eds.), SLR’83: Sign language research (pp.126-132). Silver Spring, MD: Linstok Press.

Arbib, M. A., Liebal, K., & Pika, S. (2008). Primate vocalization, gesture, and the evolution of human language. Current Anthropology 49: 1053–1076.

Armstrong, D. F., Stokoe, W. C., & Wilcox, S. E. (1995). Gesture and the nature of language. Cambridge, UK: Cambridge University Press.

Armstrong, D. F., & Wilcox, S. E. (2007). The gestural origin of language. New York: Oxford University Press.

Bernardis, P., & Gentilucci, M. (2006). Speech and gesture share the same communication system. Neuropsychologia, 44, 178-190.

Boë, L. J., Berthommier, F., Legou, T., Captier, G., Kemp, C., Sawallis, T. R., Becker, Y, Rey, A. & Fagot, J. (2017). Evidence of a Vocalic Proto-System in the Baboon (Papio papio) Suggests Pre-Hominin Speech Precursors. PloS one, 12(1), e0169321.

Boesch, C. (1996). The emergence of cultures among wild chimpanzees. In W. G. Runciman, J. M. Smith, & R. I. M. Dunbar (Eds.), Proceedings of The British Academy, Vol. 88. Evolution of social behaviour patterns in primates and man (pp. 251-268). New York, NY, US: Oxford University Press.

Clough, S., & Hilverman, C. (2018). Hand gestures and how they help children learn. Frontiers for Young Minds, 6, 29.

Clough, Sharice & Hilverman, Caitlin (2018). Can Gestures Show us When Children are Ready to Learn?. In Haaland, Kathleen. Hand Gestures and How They Help Children Learn.

Corballis, M. C. (2002). From hand to mouth: The origins of language. Princeton, NJ: Princeton University Press.

De Waal, F. B. M., & Pollick, A. S. (2011). Gesture as the most flexible modality of primate communication. In M. Tallerman & K. R. Gibson (Eds.), The Oxford Handbook of Language Evolution (pp. 82–89). New York: Oxford University Press.

Erard, M. (2005). A language is born. New Scientist, 188(2522), 46-49.

Fitch, W. T. (2000). The evolution of speech: a comparative review. Trends in cognitive sciences, 4(7), 258-267.

Fox, M. (2007). VILLAGE OF THE DEAF. Discover, 28(7), 66.

Hayes, K. J., & Hayes, C. (1952). Imitation in a home-raised chimpanzee. Journal of Comparative and Physiological Psychology, 45(5), 450-459. doi:10.1037/h0053609

Humphries, T., & MacDougall, F. (2000). “Chaining” and other links: Making connections between American Sign Language and English in two types of school settings. Visual Anthropology Review, 15(2), 84-94.

Iverson, J. M., & Thelen, E. (1999). Hand, mouth, and brain: The dynamic emergence of speech and gesture. Journal of Consciousness Studies, 6, 19–40.

Jackendoff, R. (2006). How did language begin?. Linguistic Society of America.

Jacob, Pierre, “Intentionality”, The Stanford Encyclopedia of Philosophy (Spring 2019 Edition), Edward N. Zalta (ed.), URL = <https://plato.stanford.edu/archives/spr2019/entries/intentionality/>.

Kendon, A. (2016). Reflections on the “gesture-first” hypothesis of language origins. Psychonomic Bulletin & Review, 1-8.

Lieberman, P. et al. (1969). Vocal tract limitations on the vowel repertoires of rhesus monkeys and other nonhuman primates. Science, 164(3884), 1185–1187.

Meir, I., Sandler, W., Padden, C., & Aronoff, M. (2010). Emerging Sign Languages. The Oxford Handbook of Deaf Studies, Language, and Education,2. doi:10.1093/oxfordhb/9780195390032.013.0018

Paulesu, E., Frith, C. D., & Frackowiak, R. S. (1993). The neural correlates of the verbal component of working memory. Nature, 362(6418), 342–345.

Pika, S., Liebal, K., Call, J., & Tomasello, M. (2005). The gestural communication of apes. Gesture, 5, 41–56.

Pollick, A. S., Jeneson, A., & de Waal, F. B. M. (2008). Gestures and multimodal signalling in bonobos. In T. Furuichi & J. Thompson (Eds.), The bonobos: Behavior, ecology, and conservation (pp. 75–94). New York: Springer.

Rizzolatti, G., & Sinigaglia, C. (2008). Mirrors in the Brain: How Our Minds Share Actions and Emotions. Oxford: Oxford University Press.

Rochat, P. (1989). Object manipulations and exploration in 2- to 5-month-old infants. Developmental Psychology, 25, 871–884.

Sasaki, C. T. et al. (1977). Postnatal descent of the epiglottis in man. Arch. Otolaryngol, 103, 169–171.

Savage-Rumbaugh, S., Taylor, T. J., & Shanker, S. (1998). Apes, language, and the human mind. New York: Oxford University Press.

Senghas, R. J., Senghas, A., & Pyers, J. E. (2005). The emergence of Nicaraguan Sign Language: Questions of development, acquisition and evolution. In J. Langer, S. T. Parker, & C. Milbrath (Eds.), Biology and knowledge revisited: From neurogenesis to psychogenesis (pp. 287–306). Mahwah, NJ: Lawrence Erlbaum Associates.

Steklis, H. D., & Harnad, S. (1976). From hand to mouth: Some critical stages in the evolution of language. Annals of the New York Academy of Sciences, 280, 445-455.

Vauclair, J. (2004). Lateralization of communicative signals in nonhuman primates and the hypothesis of the gestural origin of language. Interaction Studies, 5(3), 365-386.

Zatorre, R. J., Evans, A. C., Meyer, E., & Gjedde, A. (1992). Lateralization of phonetic and pitch discrimination in speech processing. Science, 256(5058), 846–849.