In our last session of Singapore ReproducibiliTea on November 14th, we not only met at the Arc at Nanyang Technological University’s (NTU) main campus, but also had participants from the Centre for Biomedical Research Transparency and the Brain, Language and Intersensory Perception Lab (BLIP Lab – @BLIPLabNTU) join us virtually from NTU’s second campus at Novena. The aim of this session was to discuss the prevalence of Questionable Research Practices and why they are problematic, focusing on the target publications Measuring the Prevalence of Questionable Research Practices With Incentives for Truth Telling by John, Loewenstein and Prelec (2012) and The Nine Circles of Scientific Hell by Neuroskeptic (2012). As we have many researchers from the National Institute of Education (NIE) in our group, we decided to complement these publications with a recent preprint by Makel, Hodges, Cook and Plucker on Questionable and Open Research Practices in Education Research.

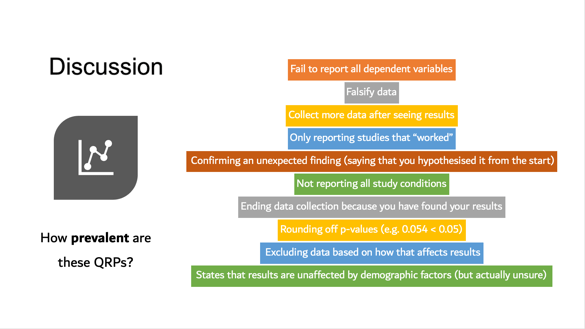

Our discussion leader in this session, Atiqah Azhari from the Social and Affective Neuroscience Lab (SAN Lab – @SANLabNTU) at Nanyang Technological University (NTU), did an amazing job of stimulating our discussion and posing challenging questions that made us reflect on the impact of questionable research practices on the development of scientific knowledge. The interdisciplinary composition of our group made us realize that some research practices can range from appropriate to questionable to clearly wrong, depending on the research field and approach in which they occur. For instance, in certain situations in qualitative research, “ending data collection because you have found your results” would be a recommended practice, while in quantitative research it is considered a questionable research practice and is often associated with p-hacking. Nevertheless, there are certain research practices that we all agreed are clearly wrong and perhaps should not even be called “questionable”, such as “falsifying data”.

Another idea that we discussed is that researchers often fall into questionable research practices because of the pressure to publish “compelling stories” with significant results. Many of the questionable research practices mentioned by John, Loewenstein and Prelec (2012) seem to fall into this category of selectively reporting methods, analyses and results to increase the chances of getting published. Some examples are “failing to report all dependent variables”, “only reporting studies that ‘worked’”, “confirming an unexpected finding (saying that you hypothesized it from the start)”, “not reporting all study conditions” and “excluding data based on how that affects results”. We wondered whether one way to help start a conversation about these issues in our local context would be to invite editors of locally run peer-reviewed journals to our ReproducibiliTea sessions.

Another problem that we discussed was that, in some cases, using the adjective “questionable” instead of “wrong” or “problematic” might be preventing the community from realizing the massive negative impact that these practices have on the development of scientific knowledge and on society in general. As Paul Glasziou and Iain Chalmers (2016) explain in a blog post based on a commentary published in 2009, “85% of all health research is being avoidably ‘wasted’”, which translates into an “annual waste of $170 billion” of the “$200 billion per year spent globally on health and medical research”. Similarly, in the field of educational research, Makel, Hodges, Cook and Plucker (2019) conclude that “questionable research practices can lead to massive mis-investment in educational practices with unrealistic expectations of impact. Implementing educational practice based on faulty research can cause real harm to children and distract from implementing practice based on less-alluring but more reliable and accurate research” (p.23). Hopefully, our Singapore ReproducibiliTea journal club meetings can help increase awareness of the impact of questionable research practices among researchers in Singapore and create opportunities to adopt open science practices.

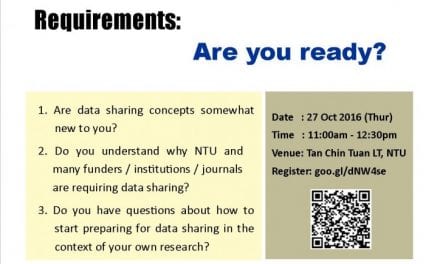

Next week we will be meeting on Thursday 21st of November to discuss Reproducibility Now: Why many studies are not reproducible, based on the publication Estimating the reproducibility of psychological science by the Open Science Collaboration (2015). We are very lucky to welcome Giulio Gabrieli from the Social and Affective Neuroscience Lab (SAN Lab – @SANLabNTU) at NTU as our discussion leader for this upcoming session. So read the paper and come along for Teh Peng, snacks and Open Science chats! We will be waiting for you at:

The Arc – Learning Hub North, TR+18, LHN-01-06

Thursday 21st of November, 1-2pm

You can also join us virtually by contacting alexa.vonhagen@nie.edu.sg and requesting relevant information.